BPO operations are built for constant change. Contracts ramp quickly, headcount fluctuates, and compliance expectations never ease, all while margins remain under pressure. Yet one area that underpins all of this is still rarely treated as strategic infrastructure: how devices are provisioned, managed, and recovered at scale.

For many BPOs, device management sits in the background. It is often fragmented across internal IT teams, multiple suppliers, and manual processes that have evolved over time. While this may work at smaller volumes, it quickly becomes a constraint as operations scale.

When Devices Slow Performance

In high-velocity BPO environments, time is directly linked to revenue. Delays in device onboarding mean agents cannot go live, training investment is underused, and programmes lose momentum. Offboarding creates equal risk. High churn and contract changes mean devices leave the estate constantly, and without tight controls this exposes data, compliance, and asset recovery issues.

Alongside risk, cost quietly increases. Idle devices, unnecessary new purchases, repeated configuration work, and reactive support all erode margins, often without being visible as a single problem.

Why Traditional Models Fall Short

Many BPOs still rely on capital-heavy purchasing or piecemeal provisioning models that were never designed for workforce volatility. Buying new devices for each ramp-up ties up capital and leaves surplus stock when demand drops. Limited asset visibility makes it difficult to track usage, ownership, and compliance, especially as remote and hybrid delivery models expand.

A Managed, Circular Alternative

An increasing number of BPO providers are adopting a fully managed, circular IT and asset management model. This approach treats devices as operational infrastructure rather than one-off purchases. Devices are pre-configured, securely deployed, supported in-life, rapidly recovered, refreshed, and redeployed based on forecast demand.
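The deploy, recover, refresh and redeploy loop described above can be sketched as a small state machine. This is a minimal illustration under stated assumptions. The `State` names, the `Device` class and the transition table are hypothetical. They are chosen to show two properties the model relies on: a recovered device cannot re-enter the ready pool until it has been wiped, and every move leaves an auditable record.

```python
from dataclasses import dataclass, field
from enum import Enum


class State(Enum):
    # Hypothetical lifecycle states for the managed, circular model described above
    CONFIGURED = "configured"  # pre-configured and ready to deploy
    DEPLOYED = "deployed"      # issued to an agent and supported in-life
    RECOVERED = "recovered"    # returned at offboarding
    WIPED = "wiped"            # data securely erased and certified
    REFRESHED = "refreshed"    # repaired or reimaged for reuse


# Allowed moves: a recovered device cannot be redeployed until it has been wiped
TRANSITIONS = {
    State.CONFIGURED: {State.DEPLOYED},
    State.DEPLOYED: {State.RECOVERED},
    State.RECOVERED: {State.WIPED},
    State.WIPED: {State.REFRESHED},
    State.REFRESHED: {State.CONFIGURED},
}


@dataclass
class Device:
    serial: str
    state: State = State.CONFIGURED
    history: list = field(default_factory=list)  # auditable record of every transition

    def move_to(self, new_state: State) -> None:
        if new_state not in TRANSITIONS[self.state]:
            raise ValueError(
                f"{self.serial}: cannot move from {self.state.value} to {new_state.value}"
            )
        self.history.append((self.state.value, new_state.value))
        self.state = new_state


# One full loop: deploy, offboard, wipe, refresh, and return to the ready pool
laptop = Device("SN-0001")
for step in (State.DEPLOYED, State.RECOVERED, State.WIPED, State.REFRESHED, State.CONFIGURED):
    laptop.move_to(step)
print(laptop.state, len(laptop.history))
```

Encoding the allowed moves in one table keeps offboarding controls in a single, reviewable place. A real asset platform would add ownership, location and wipe-certificate data.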

The operational impact is clear:

- Faster onboarding with ready-to-deploy devices

- Reduced risk through controlled offboarding and certified data security

- Lower cost per agent by maximising reuse and limiting capital spend

- Improved productivity through consistent configuration and support

- Measurable ESG benefits through extended device life

Turning a Constraint into a Lever

When device management is treated strategically, it stops limiting growth and starts enabling it. Onboarding accelerates, offboarding becomes predictable and auditable, and costs align more closely to active headcount rather than peaks and troughs.

In a market where speed, compliance, and efficiency define success, rethinking device management is no longer optional. A modern, circular approach to IT and asset management is becoming a foundational capability for BPOs looking to scale without adding risk or complexity.

Want to know more?

AI regulation is no longer just a tech or compliance issue; it’s becoming a boardroom priority.

In the US, at federal level, the government seems to be actively opposed to AI regulation, and in the UK, despite an interesting Private Member’s Bill, there’s no sign of any overarching AI law. But while the US and UK are still debating their approaches, the EU is ahead of the game with the world’s first comprehensive AI law: the EU AI Act. If you do business in or with Europe, this will affect you.

Why Should You Care?

No EU presence? Doesn’t matter. If you have EU customers or suppliers, you’ll likely be contractually required to meet the Act’s standards.

Remember GDPR? The EU’s data privacy rules became the global benchmark. Expect the AI Act to have a similar impact.

The Risk-Based Framework: What’s In, What’s Out

1. Unacceptable Risk: Banned

- Social scoring, manipulative AI, and biometric categorisation based on sensitive traits are prohibited

- Watch out: Using “black box” AI for things like fraud prevention or dynamic pricing could put you at risk

2. High-Risk AI: Strict Controls

- Applies to recruitment, education, healthcare, credit scoring, policing, and safety-critical infrastructure

- Requirements: Detailed risk assessments, transparency, human oversight, and conformity checks before launch

- Don’t assume you’re exempt: Even apparently innocuous recruitment screening tools could be caught by these rules

3. General-Purpose & Generative AI: New Obligations

- Foundation models (like ChatGPT or image generators) must ensure transparency, label AI-generated content, manage systemic risks, and clarify use of copyrighted data

4. Limited-Risk AI: Transparency Required

- Chatbots and similar tools must clearly inform users they’re interacting with AI.

- Heads up: Many bot providers still advise clients to hide from customers that they’re talking to machines; this will need to change

5. Minimal-Risk AI: Largely Unaffected

- Spam filters, video game AI, and similar tools are mostly out of scope

The Compliance Challenge

For UK and global businesses, the message is clear: even without local laws, EU standards will shape your obligations. Cross-border operations will face growing compliance pressure, just as they did with GDPR.

Balancing Innovation and Compliance

The real challenge? Staying innovative while meeting new regulatory demands. Businesses must:

- Identify which AI systems are in scope (which will include understanding exactly which parts of the business are using AI, to do what)

- Ensure transparency and risk management

- Be ready to demonstrate compliance to customers and partners
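As a starting point for the first step, a simple inventory that records who uses each AI system, and for what, can assign a provisional tier to prioritise review. The sketch below assumes a hypothetical `AISystem` record and a crude keyword map built only from the examples in the tiers above. General-purpose model obligations are left out. The map triages rather than classifies: anything it cannot match is flagged rather than assumed to be minimal risk.

```python
from dataclasses import dataclass

# Hypothetical, deliberately incomplete keyword map from the examples in the
# risk tiers above, checked from most to least severe.
TIER_KEYWORDS = [
    ("unacceptable", ("social scoring", "manipulative", "biometric categorisation")),
    ("high", ("recruitment", "education", "healthcare", "credit scoring",
              "policing", "critical infrastructure")),
    ("limited", ("chatbot", "voice bot")),
    ("minimal", ("spam filter", "video game")),
]


@dataclass
class AISystem:
    name: str
    owner: str    # which part of the business uses it
    purpose: str  # what it is used to do


def provisional_tier(system: AISystem) -> str:
    purpose = system.purpose.lower()
    for tier, keywords in TIER_KEYWORDS:
        if any(keyword in purpose for keyword in keywords):
            return tier
    # Unknown uses are flagged for review rather than assumed to be minimal risk
    return "needs review"


inventory = [
    AISystem("CV screener", "HR", "Recruitment shortlisting"),
    AISystem("Web assistant", "Customer service", "Chatbot for billing queries"),
    AISystem("Mail gateway", "IT", "Spam filter for inbound email"),
    AISystem("Price engine", "Commercial", "Dynamic pricing"),
]
for s in inventory:
    print(f"{s.name:<14}{s.owner:<18}{provisional_tier(s)}")
```

The dynamic-pricing engine lands in "needs review". That is the intended behaviour, given the earlier warning about black-box pricing.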

Need Help Navigating the EU AI Act?

At Customer Contact Panel, we help organisations find compliant, effective AI solutions, so you can innovate with confidence and accountability.

We’re caught between high expectations and uneven delivery. AI has the potential to transform contact centres, but only if implemented in a transparent, human-centred, and context-aware way. This article explores how consumer sentiment, operational strategy, and evolving AI technologies converge to reveal what works and what needs further improvement.

In our February 2025 whitepaper, Customer Contact Panel highlighted that this would be a ‘year of difficult conversations’ in which speed, automation, and empathy must be reconciled. AI can increase efficiency, but risks creating a sense of detachment if it isn’t matched with emotional intelligence. Interestingly, complementary research suggests people are more honest with AI when judgment is removed, particularly in sensitive domains such as mental health, financial support, or legal services. However, as the MaxContact report confirms, the majority of consumers still turn to voice when the stakes are high.

What Consumers Are Saying (And Why It Matters)

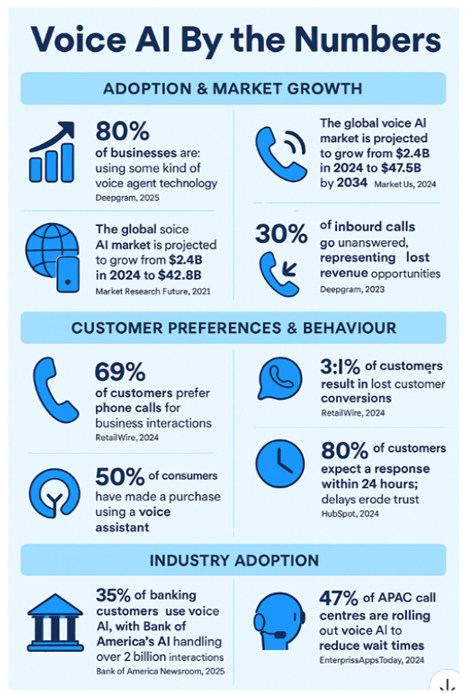

Voice AI by the Numbers: a summary of adoption, customer preferences, and industry usage statistics from the Synthflow whitepaper.

What does all this mean for CX leaders?

MaxContact’s survey found that 55% of people abandon calls due to long wait times, while 35% cite the agent’s lack of understanding. Complex account issues, payment negotiations, or emotional complaints are scenarios where empathy matters (and where automation often fails). The data reinforces what many CX leaders already sense: customers will accept AI for triage or routine tasks, but demand a human for anything nuanced.

Only 36% of respondents believe AI has improved their contact centre experience, and nearly 32% say it has made it worse. There’s a clear generational divide: 65% of 25-34-year-olds are comfortable with AI, but only 27% of over-55s feel the same. This generational lens is essential when planning AI and omnichannel strategies.

The core problem is bad AI, not AI itself. As noted in our earlier whitepaper, many AI deployments fail not due to technical limitations, but due to design and governance flaws. When AI is introduced without clear escalation paths, brand tone calibration, or decision traceability, customer confidence suffers. Mature solutions in the market now take a more human-aligned approach, creating AI agents that behave like brand-trained teammates, capable of recognising tone, understanding escalation logic, and respecting compliance frameworks.

Every decision should be traceable. Every transfer should carry context. These principles distinguish AI that scales from AI that stalls.
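Those two principles can be made concrete as a data structure. The sketch below is hypothetical: the `Decision` and `Handoff` types and their fields are illustrative, not any vendor's schema. Each AI decision is logged with its reason. On escalation, the whole trail passes to the human agent, together with a summary and the customer's sentiment, so the customer doesn't have to repeat themselves.

```python
import json
from dataclasses import asdict, dataclass, field
from datetime import datetime, timezone


@dataclass
class Decision:
    step: str
    outcome: str
    reason: str  # why the AI decided this: the traceable part
    at: str = field(default_factory=lambda: datetime.now(timezone.utc).isoformat())


@dataclass
class Handoff:
    """Hypothetical context packet passed to a human agent on transfer."""
    customer_id: str
    intent: str
    summary: str
    sentiment: str
    decisions: list  # ordered audit trail of every AI decision so far


def escalate(customer_id, intent, summary, sentiment, trail):
    """Record the escalation itself, then hand over the full context."""
    trail.append(Decision("escalation", "transfer to human", f"sentiment={sentiment}"))
    return Handoff(customer_id, intent, summary, sentiment, list(trail))


trail = [Decision("triage", "intent=payment dispute", "customer said 'charged twice'")]
packet = escalate("C-1042", "payment dispute",
                  "Customer reports a duplicate charge.", "frustrated", trail)
print(json.dumps(asdict(packet), indent=2))
```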

Omnichannel vs. Human-Centric: Getting the Balance Right

Consumers prefer voice support for immediate, emotionally resonant assistance. MaxContact’s research shows 60% view phone calls as the fastest route to resolution, far surpassing digital channels. Automation should manage repetitive tasks and noise, freeing up humans for high-value, high-empathy interactions. Smart triage, seamless handoffs, and transparent automation logic are crucial for omnichannel success.

Trust, Tone, and Transparency: Designing AI That Works

To address the most-cited customer frustrations (poor escalation, limited response options and a robotic tone), solutions must be designed with:

- Cultural and tone calibration

- Customisable escalation protocols

- Transparent audit trails

- Privacy-by-design aligned to GDPR and beyond

These aren’t technical ‘extras’; they are fundamental requirements in sectors where mistakes can harm trust, reputations, or wellbeing. In regulated or high-stakes categories such as healthcare, dating, or finance, the operational risk of misjudged automation is simply too high.

AI has advanced quickly, but trust remains fragile. Customers want efficiency, but not at the cost of clarity or empathy. The future of contact is digitally respectful, not just digital. The best AI solutions will pause, listen, and escalate when needed, not just answer fastest.

For contact centres navigating this balance in 2025, the opportunity lies in creating experiences that feel both seamless and human, where AI takes the pressure off but never takes over.

Of all the contact centre use cases for AI, Pure Voice AI is the most disruptive – and potentially the most transformative. Unlike Agent Assist or auto-wrap that augment human performance, Pure Voice AI replaces the agent entirely for certain interactions.

What is the AI doing in Pure Voice AI?

Pure Voice AI uses fully autonomous AI agents capable of holding spoken conversations with customers, with no human agent in the conversation. For an inbound call, the AI can triage the call and, if it can deal with the interaction itself, resolve it without troubling a human agent. If the enquiry does need a human agent, it can monitor who is available and route the call to the next best available agent.

Ultimately, the idea is that these AI agents can answer questions, resolve issues, and even handle sensitive interactions such as payment disputes or appointment scheduling.

It’s far more sophisticated than IVR (interactive voice response) trees or chatbots. Pure Voice AI uses advanced natural language understanding, real-time decisioning, and speech synthesis to hold dynamic, human-like conversations.
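The triage-then-route flow described above might look like the following sketch. Several details are assumptions for illustration: the intent labels, the `Agent` fields, and the "longest-idle skilled agent" rule for choosing the next best available agent. Real routing engines weigh many more factors.

```python
from dataclasses import dataclass

# Intents the voice AI is assumed to resolve end to end (hypothetical list)
SELF_SERVE_INTENTS = {"balance enquiry", "appointment booking", "address change"}


@dataclass
class Agent:
    name: str
    skills: set
    available: bool
    idle_seconds: int  # time since the agent's last call ended


def route(intent: str, agents: list) -> str:
    """Resolve with AI where possible, otherwise pick the next best available agent."""
    if intent in SELF_SERVE_INTENTS:
        return "handled by voice AI"
    # "Next best" here means skilled, free and longest idle: one common rule among many
    candidates = [a for a in agents if a.available and intent in a.skills]
    if not candidates:
        return "queued for next skilled agent"
    best = max(candidates, key=lambda a: a.idle_seconds)
    return f"transferred to {best.name}"


agents = [
    Agent("Asha", {"payment dispute"}, True, 40),
    Agent("Ben", {"payment dispute", "complaint"}, True, 95),
    Agent("Cara", {"payment dispute"}, False, 300),
]
print(route("balance enquiry", agents))
print(route("payment dispute", agents))
print(route("bereavement", agents))
```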

Key benefits: 24/7 service

The benefits case here is far less about cost reductions, agent productivity gains and optimisations, as use cases 1 to 6 already deliver well on those.

It is far more about providing round-the-clock service and enhancing the brand experience. We all have lives and work commitments that can make calling during set hours difficult. Yet many contact centres are not staffed 24/7 with human agents because the business case for the costs and overheads of doing so, from staff costs to heating and lighting, doesn’t stack up.

Smoothing demand

Not only is calling at set times difficult, it also creates spikes in demand, for example around lunchtime or just after work. Round-the-clock AI availability lets that demand spread more evenly, and proactive outbound calls can also be scheduled for more customer-friendly times of day.

Multi-lingual cover

Where a contact centre needs to serve multiple languages, there is typically a primary language that most human agents speak, with a handful of specialists available for secondary languages. Those secondary languages are therefore a scarce resource, both in availability and in recruitment. Pure Voice AI can detect the language being spoken and switch seamlessly into it.
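Once the language has been detected (assumed here to happen upstream in the speech pipeline), the routing decision itself can be simple. In this hypothetical sketch, a call goes to a free human speaker of the language if there is one. Failing that, it goes to the voice AI if the AI supports the language, and otherwise to a callback. The preference order is a design assumption, not a recommendation from the source.

```python
def choose_handler(language: str, free_speakers: dict, ai_languages: set) -> str:
    """Pick who takes a call once its spoken language has been detected.

    free_speakers: hypothetical map of language code -> free human agents who speak it.
    ai_languages: language codes the voice AI is assumed to support.
    """
    if free_speakers.get(language, 0) > 0:
        return "human agent"
    if language in ai_languages:
        # The scarce specialist is busy: the AI continues in the caller's language
        return "voice AI"
    return "callback"  # no free speaker and no AI support


free = {"en": 42, "pl": 0, "cy": 1}
ai_supported = {"en", "pl", "cy"}
for lang in ("en", "pl", "pt"):
    print(lang, choose_handler(lang, free, ai_supported))
```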

Implementation considerations

Many organisations are rushing towards this use case, but without computer use, proper integrations, and optimised and redesigned processes, there is no real opportunity to leapfrog to full voice AI: the foundations are simply not in place to support it.

What can we expect to see?

While not quite there yet, Pure Voice AI is just around the corner, and there will undoubtedly be a proliferation of it, especially for outbound. However, businesses should expect regulation to follow swiftly.

Until Pure Voice AI reaches its true potential, the task for today is charting a path to achieving it in future, not attempting it now. Down that road lie complexity, risk and a far greater likelihood of project failure, when you could instead be realising value right now, and incrementally, from use cases that build the maturity on which to develop pure voice AI. That is a far safer path to value on every front.

To find out more about how CCP can help you make the right technology choices, read more here or get in touch.

This series of articles is drawn from our webinar with Jimmy Hosang, CEO and co-founder at Mojo CX. We explored seven key use cases for AI in contact centres, starting from the easiest productivity gains to value generating applications. You can find a summary of all seven use cases here, or watch the webinar in full here.

What’s next for Consumer Duty? More of the same, that’s what! As we all know, that’s the nature of the regime; it’s here for keeps.

We know from the FCA’s reviews of firms’ mandatory Consumer Duty Board Reports that its initial assessment of the industry’s response to the requirements of Consumer Duty has been broadly “ok for starters, but you can try harder”!

The FCA’s update on its review of the Consumer Duty rules promised some simplification and removal of some arguably unnecessary, prescriptive requirements (in line with the Treasury’s ‘cut red tape’ agenda), but the range and depth of the permanent change in the treatment of customers that the FCA wants to see will remain.

This is to be expected and no doubt most firms are focused on the FCA’s specific callouts for Board Report improvements such as:

- Improving the quality of data – and the insights derived from it

- More fully reflecting the needs of different consumer cohorts, especially those with vulnerabilities

- Ensuring that boards are challenging – and seen to be challenging – the business to meet the Consumer Duty’s requirements

- Clarity on the timescale, action owners and data to drive planned improvements

But one further area for improvement will be particularly relevant to colleagues in the customer experience and/or contact centre space – “Comprehensive view across distribution chains”.

Yanking the chains

The FCA has long recognised the importance of distribution and supply chains, and their potential for service and experience failure. Many financial services organisations will already have had to review their supply chains to meet the FCA’s expectations around Operational Resilience.

Meeting the outsourced, sub-contracted and third-party challenge

In the context of Consumer Duty, though, the focus needs to be less on the dangers of total failure than the more subtle risks of poor visibility and exchange of information, and inconsistent consumer treatment and experience.

The way in which financial products and services are sold, delivered and supported can often involve multiple partners in the supply chain – covering sales, payments, customer service, claims, redemptions and other functions.

At nearly every point of the customer journey the way in which consumers are supported and interacted with, both through human-to-human dialogue and automated channels, creates a Consumer Duty risk.

Outsourced and sub-contracted relationships need to be managed so that the standards of consumer data and insight, advisor training and empowerment, online and automated information and decision-making, consumer recognition, fairness, and effective complaint recognition and resolution are all delivered as well as they are in-house. Doing so will require a blend of initiatives and efforts, including:

- Contracts and service schedules; contractual management information and KPIs

- Data and information security assurance, including payments (the news that Marks & Spencer’s recent cyber-attack, estimated to have cost around £300m, is being blamed on a third-party supplier’s error highlights how critical this area is)

- The quality assurance and provision of guidance and information to both customers and advisors

- The ability to share and identify customer profiles and features (especially vulnerability factors)

- Advisor training and coaching

- The provision of self-serve and assisted support tools and concessionary measures

(and all of these are an ongoing commitment, not just a ‘one time fix’)

In Summary

Managing complex customer supply chains can be tricky at the best of times, but adding in a raft of demanding regulatory expectations and requirements makes it more difficult still.

Have you already met this challenge or are you still assessing how to better go about it? Let us know. Get in touch, we’d love to chat.

Identifying and supporting vulnerable customers – such as those experiencing financial difficulties, health issues, or emotional distress – is crucial for ethical, compliant and effective service delivery.

While the FCA has long taken a leading role in this space, other regulators such as Ofcom and Ofgem have also required vulnerability protections to be in place, with the UK’s Digital Markets, Competition and Consumers Act 2024 (DMCC), which came into effect on 6 April 2025, also widening the concept of vulnerable customers.

With penalty thresholds higher than ever, the risks of not identifying vulnerable customers can be significant. Fines can now be imposed without a court order and at eye-watering levels, with reputational risk a compounding factor, not to mention the impact on vulnerable individuals themselves.

What’s more, with UK and EU law diverging, cross-border businesses need to be even more on their toes across jurisdictions.

What is the AI doing to detect vulnerability?

With the preceding three use cases essentially laying the groundwork for this kind of analysis and management, AI can identify signs of vulnerability by analysing speech patterns, language cues, and emotional indicators.

Key benefits: Categorisation and risk scoring

Vulnerability is a spectrum, and customers can move in and out of vulnerable states or between risk factors. Detecting this manually, however, is fraught with difficulty.

First, different people have different – and subjective – views on whether a customer may be indicating a vulnerability factor.

Second, the cues can be subtle and therefore challenging to pick up, especially when an agent – as a normal part of their job – is multi-tasking across multiple screens, taking notes and trying to hold a conversation at the same time.

But the AI is far less likely to miss those cues, because it isn’t distracted, isn’t having a bad or busy day, and doesn’t feel empathy as an emotion. Any empathy the AI shows is learned from training data, and is therefore applied consistently.

Record accuracy

As with use case 1, the use of AI enhances note taking and record-keeping by transcribing and summarising the call automatically. This avoids any temptation to rush the process, and risk non-compliance, while allowing the agent to focus solely on the customer’s needs.

Compliance alerts

You could employ this kind of analysis retrospectively and still gain some of the benefit, since even post-call analysis allows potentially vulnerable customers to be flagged for proactive outbound contact or other management. However, the nature of the regulatory and legal environment makes a real-time, in-flight approach more desirable: prevention is almost always better than cure.

Real-time vulnerability detection

The ultimate deployment of real-time detection during a call allows agents to adjust their approach on the fly. And for a true ‘belt and braces’ approach, if a risk score is exceeded, this can be flagged as a ‘red alert’ to the agent, very clearly instructing them not to sell, to do a welfare check, or provide relevant support information.

All of which not only manages the risk to individuals and the business, but empowers agents with the confidence to handle sensitive situations effectively and retains consumer trust through a commitment to their wellbeing that can also foster loyalty.
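The real-time scoring and "red alert" idea above can be sketched as follows. This is a deliberately simplified stand-in. The cue phrases, their weights and the `RED_ALERT_THRESHOLD` value are all hypothetical. Keyword matching takes the place of the speech, language and emotion models a real system would use.

```python
# Hypothetical cue weights: a crude keyword stand-in for the speech, language
# and emotion models a real vulnerability-detection system would use.
CUE_WEIGHTS = {
    "lost my job": 0.4,
    "bereavement": 0.5,
    "can't afford": 0.35,
    "hospital": 0.3,
    "struggling": 0.25,
    "don't understand": 0.2,
}
RED_ALERT_THRESHOLD = 0.6  # assumed risk tolerance; set to your own risk appetite


def update_score(score: float, utterance: str) -> float:
    """Add the weight of every cue found in the customer's latest utterance."""
    text = utterance.lower()
    return score + sum(weight for cue, weight in CUE_WEIGHTS.items() if cue in text)


def monitor(utterances):
    """Score the call turn by turn and raise a red alert once the threshold is crossed."""
    score = 0.0
    for turn, utterance in enumerate(utterances):
        score = update_score(score, utterance)
        if score >= RED_ALERT_THRESHOLD:
            # Red alert to the agent: do not sell; do a welfare check; offer support information
            return turn, round(score, 2), "RED ALERT"
    return None, round(score, 2), "no alert"


call = [
    "I'd like to talk about my bill",
    "Honestly I'm struggling since I lost my job",
    "I can't afford the new tariff",
]
print(monitor(call))
```

Here the score only ever rises during a call. Because vulnerability is a spectrum that customers move in and out of, a production design would more likely recalculate or decay scores, and keep them on the customer record for later contacts.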

Implementation Considerations

Again, systems integration and data privacy are key factors in implementation, especially around data usage and consent. So are training and embedding belief in the AI.

In other use cases, the cost (or perceived cost) and complexity (or perceived complexity) of implementation may make the decision more difficult. Here, though, the value of the AI is less about ROI against implementation cost than about ROI against the potential cost of those eye-watering fines if you get it wrong.

Measuring Success

Here, measurement may be a little trickier, depending on how well you understand the current baseline. Given that manual QA covers only 1-2% of calls, whole swathes of risk could be going undetected.
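A quick piece of arithmetic shows why. Assume, hypothetically, 50 calls a month that show risk, and that QA samples each call independently at random. The chance that none of those calls is reviewed is (1 - rate) ** 50: about 61% at 1% sampling and about 36% at 2%.

```python
def prob_all_missed(sample_rate: float, risky_calls: int) -> float:
    """Chance that random QA sampling reviews none of `risky_calls` calls,
    assuming each call is independently selected with probability `sample_rate`."""
    return (1 - sample_rate) ** risky_calls


for rate in (0.01, 0.02):
    missed = prob_all_missed(rate, 50)
    print(f"{rate:.0%} sampling, 50 risky calls: {missed:.0%} chance none is reviewed")
```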

The ideal situation is that there is nothing to measure. No issues, no incidents.

However, you can look at measures such as feedback from vulnerable customers, the number of interventions (such as welfare checks or support information provided), and adherence to regulations, particularly if the analysis is applied retrospectively rather than in real time.

But the real benefits come from what doesn’t happen, rather than what does. In summary, they are:

- More frequent and consistent identification of customer vulnerability

- More accurate records

- More confidence in your compliance

- Less perceived risk within the business

Using AI to identify vulnerable customers not only enables contact centres to improve on Consumer Duty and meet the right ethical standards with empathetic, responsible service; it also hugely decreases the risk of the worst possible outcome (from a business viability perspective): an unexpected knock on the door from the regulator and/or widespread bad press.


Moving up the value chain of AI use cases, consistent and effective agent coaching is vital to the performance of contact centres, both in areas critical to risk management, such as regulatory compliance, and in value-driving areas such as customer experience and brand perception.

Traditionally, coaching relies on the same small samples and manual evaluations as QA (per use case 2), which inevitably means observations are sporadic and opportunities for improvement missed.

What is the AI doing in Auto Coaching?

Auto Coaching harnesses artificial intelligence to analyse agent interactions. It ‘listens’ to the conversation to identify areas of strength and opportunities for development. These data-driven insights then inform an AI-generated, individual coaching plan for each agent, giving their coach the tools to cater to individual agent needs and foster continuous improvement.
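As a minimal sketch of how per-call findings could feed an individual plan, the function below ranks one agent's most frequently flagged behaviours. The `(agent_id, behaviour)` input shape and the behaviour labels are hypothetical. A real plan would also weigh severity and compare against team averages.

```python
from collections import Counter


def coaching_plan(findings, agent_id, top_n=3):
    """Rank one agent's most frequent development points.

    findings: (agent_id, behaviour) pairs flagged across every analysed call,
    a hypothetical shape for the output of automated call analysis.
    """
    counts = Counter(behaviour for who, behaviour in findings if who == agent_id)
    return [behaviour for behaviour, _ in counts.most_common(top_n)]


findings = [
    ("A1", "no recap"), ("A1", "talk-over"), ("A1", "no recap"),
    ("A2", "no recap"), ("A1", "missed disclosure"), ("A1", "talk-over"),
    ("A1", "no recap"),
]
print(coaching_plan(findings, "A1"))
print(coaching_plan(findings, "A2"))
```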

Key benefits: Efficiency

When you consider that 60-80% of a team leader’s time (per Mojo time and motion studies) is spent gathering information from the likes of Excel or Power BI to knit together a story of performance, bringing call data, performance stats and behavioural insights into one place to prepare and deliver coaching sessions, it’s easy to see the benefit of AI taking on this task.

The benefit is not just efficiency, where it is possible to shift from a 1:12 or 1:15 manager-to-agent ratio to closer to 1:18 without losing effectiveness, but also greater team leader job satisfaction, as more of the work they do makes a difference. As with previous use cases, how you take this efficiency benefit is a choice: headcount reduction, delivering more coaching (which in turn drives customer experience improvement), or redeploying resources to more strategic tasks elsewhere.
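To put the ratio shift into numbers, the sketch below uses a hypothetical 900-agent operation and the ratios quoted above. Moving from 1:12 to 1:18 covers the same agents with a third fewer team leaders (75 down to 50).

```python
import math


def team_leaders_needed(agents: int, agents_per_leader: int) -> int:
    """Team leaders required to cover `agents` at a 1:agents_per_leader ratio."""
    return math.ceil(agents / agents_per_leader)


AGENTS = 900  # hypothetical contact-centre headcount
for ratio in (12, 15, 18):
    print(f"1:{ratio} -> {team_leaders_needed(AGENTS, ratio)} team leaders")
```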

Coaching quality and consistency

What’s more, each coaching conversation will itself improve in quality, because the feedback is based on, and prioritised by, a much larger data sample, both for individual agents and for the agent population as a whole. It also gives agents consistent feedback, not just within one-to-one manager/agent relationships but across the whole contact centre, where all managers deliver the same messages on the same coaching points to improve the quality of interactions overall. As a result, opportunities are no longer missed, the opportunity cost diminishes, and customer experience improves.

One example, particularly when integrated with other speech analytics and the QA scorecard from use case 2, is offshore contact centres, where agents speak their customers’ language but colloquialisms, dialect, accent, vocabulary, fluency, speech pacing or cultural differences cause misunderstandings or frustration.

Personalised rapid development

With AI in the mix, you no longer need to wait to deliver coaching on specific issues, or hope that you’ve picked up the key ones from the samples you reviewed manually, because the AI is dedicated to finding them every day. Coaching points for individual agents can therefore be delivered in real time or near-real time, depending on implementation.

The consequence of this targeted, personalised rapid development is that team leaders can have the right coaching conversation at the right moment, or the agent can even ‘self-coach’. Coaching becomes both more efficient and more effective. Agents develop more rapidly, picking up development points as they occur rather than days later, when the interaction is easier to have forgotten (or bad habits have been perpetuated), and in bite-sized pieces that make the feedback more digestible and memorable. Their job satisfaction improves through faster progression, and the business wins through better customer service, better selling or more impactful risk management.

Automated role play

A further step in the development of this use case is the potential for AI to synthesise customer calls for training at varying levels of complexity, whether to address systemic issues across the whole operation, to meet specific agent needs on the job, or as part of the grad bay. The analysis and scoring of agent responses in these role plays can then itself be automated, per use case 2.

Here, AI drives both efficiency and effectiveness throughout the contact centre. And again, by giving agents confidence in a safe environment, it can have a positive impact on other KPIs such as attrition.

Implementation Considerations

As with all other AI use cases, integrations and data privacy are key considerations. But in this case, it’s important to consider your accuracy thresholds for the AI, and how you will test for accuracy so that team leaders are confident in the AI’s ability to deliver. Furthermore, you will need to educate team leaders and agents on how to use AI-generated feedback. Always think ‘Human-in-the-loop’ (HITL) to ensure coaching is still accompanied by all of the empathy necessary to make it successful.

Measuring Success

For this use case, consider monitoring agent metrics such as first-contact resolution, number of coaching points and CSAT as measures of coaching effectiveness on a one-to-one basis, and coaching preparation time or manager/agent ratios as measures of efficiency. More broadly, consider agent retention rates as a measure of higher satisfaction and reduced turnover.

In summary, the key benefits are:

• Significant reduction in the 60-80% of team leader time spent preparing coaching

• Shift of manager/agent ratio from c. 1:12 to 1:18

• Higher job satisfaction and reduced attrition among agents

• Higher job satisfaction among team leaders

• Improved CSAT and brand perceptions as service improves across the board

As we move up the value chain, the AI does get more difficult to implement. However, the payoff tends to get bigger too. Auto Coaching can be considered a strategic investment in agent development that fosters a culture of continuous improvement, leading to enhanced performance and customer satisfaction.


Quality assurance (QA) is a staple of every contact centre, more so where compliance and regulation demand it. Traditionally, manual QA reviews are concerned with the customer interaction itself, are labour-intensive and typically cover only 1-2% of calls.

While manual QA will pick up some training points, its lack of comprehensive coverage means it often misses systemic issues that haven’t yet become obvious elsewhere in the organisation but could be found buried in call analysis.

What is the AI doing in Auto QA?

Auto QA uses artificial intelligence to automate the evaluation of both the customer interaction, through transcription (remember use case 1, auto-wrap) and sentiment analysis, and what the agent did on systems.

Let’s examine the benefits.

Key benefits: Comprehensive coverage

With AI, it is possible to cover 100% of interactions; to fully assess agent performance consistently and at scale across all interactions and all areas of the QA scorecard, and send alerts straight to a team leader’s desktop.

Resource optimisation

With manual QA, you typically see a ratio of around 1:30 to 1:50 QA staff to agents. With Auto QA, you can expect around a 75% reduction in that overhead. That is significant when working on fine margins, whether you take it as headcount reduction or redirect those resources to transformation or speech analysis tasks rather than data gathering.
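The arithmetic behind that claim can be shown for a hypothetical 600-agent centre, using the ratios and the roughly 75% reduction quoted above. At 1:30, 20 QA staff become about 5; at 1:50, 12 become about 3.

```python
def qa_headcount(agents: int, agents_per_qa: int, reduction: float = 0.0) -> float:
    """QA staff needed at a 1:agents_per_qa ratio, after an optional fractional reduction."""
    return agents / agents_per_qa * (1 - reduction)


AGENTS = 600  # hypothetical contact-centre headcount
for per_qa in (30, 50):
    before = qa_headcount(AGENTS, per_qa)
    after = qa_headcount(AGENTS, per_qa, reduction=0.75)
    print(f"1:{per_qa}: {before:.0f} QA staff manually, about {after:.0f} with Auto QA")
```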

Consistent evaluations

As with any human task, while we may believe all QA people are using their scorecard and delivering in the same way, even with calibration sessions and financial incentives, the chances of that being the case are slim; you may already know this from those calibration sessions. Indeed, the interpretation of the calibration itself may be flawed – for example, two different people may have very different takes on what constitutes empathy.

So while an AI scorecard evaluation of a voice interaction may, for example, only be 80% accurate to begin with, it is consistently 80% accurate, as opposed to the potential for human analysis to vary significantly and most likely sit at a lower accuracy figure of around 65%. Meaning more calls are scored at greater accuracy overall.
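Coverage and accuracy multiply. Take the figures above (2% manual coverage at around 65% accuracy, versus 100% AI coverage at 80%) and a hypothetical 10,000 calls. The expected number of calls that are both evaluated and scored correctly is 130 manually, versus 8,000 with Auto QA.

```python
def accurately_scored(total_calls: int, coverage: float, accuracy: float) -> int:
    """Expected number of calls that are both evaluated and scored correctly."""
    return round(total_calls * coverage * accuracy)


CALLS = 10_000  # hypothetical monthly call volume
manual = accurately_scored(CALLS, coverage=0.02, accuracy=0.65)
auto = accurately_scored(CALLS, coverage=1.0, accuracy=0.80)
print(f"Manual QA: {manual} calls; Auto QA: {auto} calls")
```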

Real-time feedback

Finally, the benefits of real-time feedback, while softer, are easy to understand, and they are completely measurable via the scorecard.

First, immediately picking up training points allows the agent to implement improvements on the very next interaction.

Second, for an agent taking hundreds of calls a day, picking up a training point even a few hours after the call occurred (especially if the interaction reason or resolution was atypical) makes it harder for the improvement to stick, even with the call to hand.

Implementation considerations

Setting aside systems integration, data privacy and compliance, and focusing instead on the vagaries of AI: accuracy (or the lack of it) translates directly into an impact on human resources, because a less accurate AI could result in resources being wasted on issues that aren’t issues.

This is why it is always desirable to keep humans in the loop (HITL), whether in training, developing and refining the AI models, or in checking their conclusions before feedback is delivered.

With a combination of human review and machine learning improvements, the 80% accuracy figure can rise to 85-90% in around four weeks, at which point you can consider redeploying human resources to other tasks. For systems interactions, including chat, you would expect greater accuracy from the outset, as the AI has more controlled data to assess from the start.

If you can achieve the 95-100% accuracy that Mojo CX claims, you can be confident that human resources are targeted where they are needed most. You may even be willing to accept a lower rate of accuracy if the QA benefits outweigh the wastage; this is a decision unique to your business. So, as with use case 1, it is important to understand the true baseline the AI is improving upon.
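One simple way to see why accuracy matters for resourcing is to count the evaluations a human would need to catch or correct at each accuracy level. This sketch assumes a hypothetical 10,000 evaluations a month and treats every inaccurate evaluation as equally costly, which is a simplification: in practice some errors matter far more than others.

```python
def misscored(evaluations: int, accuracy: float) -> float:
    """Expected evaluations that are wrong and may trigger unnecessary follow-up."""
    return evaluations * (1 - accuracy)


EVALUATIONS = 10_000  # hypothetical month of fully covered interactions
for accuracy in (0.80, 0.85, 0.90, 0.95):
    wrong = misscored(EVALUATIONS, accuracy)
    print(f"{accuracy:.0%} accurate -> ~{wrong:,.0f} evaluations to catch or correct")
```

Moving from 80% to 90% halves the number of incorrect evaluations, from around 2,000 to around 1,000 in this example, which is what makes redeploying QA resources realistic.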

Equally, you may choose not to assess 100% of calls, for processing and ESG reasons. These are all tolerances and optimisations that you can test and set to deliver against competing KPIs.

Measuring Auto QA success

For any AI implementation, it’s important to measure its success, as this will build the case for future implementations, whether that’s through headcount, resource allocation, QA KPIs or any of the many other contact centre KPIs.

In summary, the benefits are:

· 75% reduction in QA processing time

· 50-100x increase in evaluated interactions

· 15-25% increase in evaluation accuracy and consistency

· Greater and faster improvement in agent performance and CSAT
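The coverage and accuracy figures can be sanity-checked against each other. Assuming manual QA covers 1-2% of calls (the range quoted in our summary of all seven use cases) at around 65% accuracy, and Auto QA covers every call at 80%, the sketch below compares how many interactions are evaluated and how many are scored correctly. The 10,000-call volume and the function names are hypothetical.

```python
def evaluated(interactions: int, coverage: float) -> float:
    """Interactions that receive any QA evaluation at a given coverage rate."""
    return interactions * coverage


def correctly_scored(interactions: int, coverage: float, accuracy: float) -> float:
    """Interactions that are both evaluated and scored correctly."""
    return evaluated(interactions, coverage) * accuracy


CALLS = 10_000  # hypothetical monthly volume
for manual_coverage in (0.01, 0.02):
    multiplier = evaluated(CALLS, 1.0) / evaluated(CALLS, manual_coverage)
    manual_ok = correctly_scored(CALLS, manual_coverage, 0.65)
    auto_ok = correctly_scored(CALLS, 1.0, 0.80)
    print(f"{manual_coverage:.0%} manual coverage: {multiplier:.0f}x more evaluated, "
          f"{manual_ok:.0f} vs {auto_ok:.0f} correctly scored")
```

Going from 1-2% to 100% coverage is where the 50-100x figure comes from; on these assumptions, 130 correctly scored calls (at 2% coverage) become around 8,000.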

While Auto QA is undoubtedly a little more complex to implement than use case 1, it builds on those foundations by taking call transcription to the next level.

To find out more about how CCP can help you make the right technology choices, read more here or get in touch.

This series of articles is drawn from our webinar with Jimmy Hosang, CEO and co-founder at Mojo CX. We explored seven key use cases for AI in contact centres, starting from the easiest productivity gains to value generating applications. You can find a summary of all seven use cases here, or watch the webinar in full here.

Summarising calls takes time: anywhere from 10-30% of the call. Agents are almost always under pressure to complete the task as quickly as humanly possible to meet AHT and wait-time targets, which often results in errors or even missing data. This not only makes it hard for future agents to follow the story; it can be a regulatory challenge too.

AI-driven autowrap and summarisation tools are helping to alleviate this burden by automating the process, allowing businesses to cut handling times and improve CRM accuracy. According to Jimmy, it’s one of the easiest applications of AI a contact centre can implement.

What is the AI doing in autowrap?

Autowrap and summarisation technology uses natural language processing (NLP) and machine learning to transcribe customer calls in real time. As calls progress, key details such as issues raised, resolutions, and next steps are captured automatically. This eliminates the need for agents to manually document call details, both reducing errors and freeing up time for more customer-centric tasks.

Key Benefits: Time and Cost Savings

By reducing the time spent on manual transcription, businesses can lower wrap times by 50%, which translates to a 5-15% reduction in handling times. For a contact centre with 200 agents, taking the mid-point of 10%, this is equivalent to around 20 FTEs, delivering a 2-3X ROI from day one.
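The FTE figure follows from the time that summarising takes. Assuming wrap accounts for 10-30% of handling time (as noted above) and AI halves it, the saving in handling time is half that share. The sketch below converts the saving into FTEs for the 200-agent example, assuming the saving scales linearly with headcount; real workforce planning would also account for shrinkage, occupancy and service-level targets. The function names are illustrative.

```python
def aht_saving(wrap_share: float, wrap_reduction: float = 0.5) -> float:
    """Fraction of handling time saved when wrap (a share of AHT) is cut by wrap_reduction."""
    return wrap_share * wrap_reduction


def fte_freed(agents: int, saving: float) -> float:
    """FTE equivalent of a handling-time saving, assuming a purely linear relationship."""
    return agents * saving


AGENTS = 200  # the centre size used in the example above
for wrap_share in (0.10, 0.20, 0.30):  # wrap as 10-30% of the call
    saving = aht_saving(wrap_share)
    print(f"wrap {wrap_share:.0%} of call -> AHT down {saving:.0%} -> ~{fte_freed(AGENTS, saving):.0f} FTE")
```

The 20% case corresponds to the 10% mid-point used above, or around 20 FTEs.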

How you take this benefit is then your choice:

a) A productivity gain, even if realised through natural attrition

b) A service improvement, by reducing wait times or by allowing longer calls for better first contact resolution

c) Reinvestment in more value-driving AI use cases to build maturity

Call Summary Accuracy

With manual transcription, there is always the risk of errors or omissions. AI-driven solutions eliminate these risks by automatically capturing the most relevant data from each conversation, improving both the consistency and quality of CRM records.

Increased accuracy has a number of benefits, whether or not you run a regulated business. The first is in future contacts, whether or not you met a first contact resolution goal. Any future call in which a customer refers to a previous call, and reasonably expects some level of ‘corporate memory’, can be shortened by avoiding lengthy re-explanations of what has gone before. Not only does this provide a future productivity gain, it makes for a far better customer experience too. So even at use case 1, we are already facilitating value generation, through slick customer processes that avoid typical customer frustrations, as well as productivity.

What’s more, the data is clean, reliable and available for future analysis and QA. Look out for an article on use case 2, Auto QA, for more on that subject.

These are important considerations when building a business case. Remember that your baseline probably isn’t perfection, so your quality uplift may be greater than you anticipated.

Easy Integration: No Overhaul Required

While it is undeniably desirable to integrate autowrap technology into CRM or policy admin systems, it’s not a prerequisite for making these gains. An agent (dubbed the ultimate API in our recent whitepaper) can easily check the summary, make any amendments you require (your benchmark of what is good enough will depend on your business) and copy and paste it in. Agents are already used to connecting disparate systems and will be working in the system where you want to capture the summary anyway.

This means that businesses can buck the trend of AI project failure and quickly adopt the technology with minimal disruption to existing workflows. Once the ‘short, sharp’ solution is working, of course you can consider and implement the deep integrations to automate the task, but you will be most of the way there without it.

Enhancing Agent Experience and Customer Outcomes

As alluded to earlier, the benefits aren’t just about reducing operational costs; they also enhance both the agent and customer experience. Automating mundane and often poorly executed tasks such as call transcription frees agents to focus on more valuable work, such as problem-solving and building customer relationships.

This not only boosts job satisfaction – which in itself may then also translate to tenure, sickness and recruitment gains – it also contributes to higher-quality customer interactions. Look out for use case 5, ‘Agent Assist’ for more on this topic.

Measuring Success

For any AI implementation, it’s important to measure its success, as this will build the case for future implementations, whether that’s through headcount, resource allocation or the gamut of other contact centre KPIs.

In summary, the benefits are:

1. Immediate productivity gains of c. 10% of agent handling time

2. Improved accuracy of note taking

3. Customer satisfaction gains from better corporate memory and more attentive agents

4. More time available for valuable conversations

5. Employee satisfaction gains – happier agents, longer tenures, less sickness, reduced recruitment

6. Regulatory compliance improvements

7. Easy, scalable implementation that shortens timescales and increases the chance of AI success

8. Ability to re-invest gains in building AI maturity

Ultimately, accurate (enough) autowrap is an obvious win in any contact centre.

AI in the contact centre is no longer a question of if, but where to begin. In our recent webinar with Jimmy Hosang, CEO and Co-founder of Mojo CX, we explored seven practical, high-impact AI use cases that are already delivering returns in real-world operations. From automating wrap-up notes to exploring full voice AI, the conversation cut through the hype to focus on what’s truly working – and what’s coming next.

From productivity savings – and easy wins – to value generation, here we summarise each of the seven use cases, their benefits, pitfalls, and what it takes to make them work.

1. Autowrap / Call Summarisation

This is one of the most immediate and measurable wins for AI – and it’s relevant to every contact centre, whether procedural or regulatory. With AI transcribing and summarising calls, wrap time is reduced by 50% and average handling times by 5–15%. In a 200-seat contact centre, at 10%, that’s equivalent to freeing up 20 full-time agents.

It’s an easy sell for operations leaders: the 2-3X ROI is immediate, the data is clean (and doesn’t need complex integrations; a simple copy/paste will do to start), and the impact on agent workload is obvious. What you do with the benefit is up to you: save the 20 FTEs through natural attrition, reduce wait times or improve service. Less typing, less admin, more time for real conversations.

2. Auto QA (Quality Assurance)

Manual QA processes typically cover only 1-2% of calls. With AI-powered Auto QA, every conversation can be transcribed and assessed, increasing both coverage and scorecard accuracy, with the potential to reduce QA overhead by 75%. Once the model reaches high accuracy (which can be achieved in four weeks or less), it enables a rethink of QA resourcing, with teams reinvesting those hours in value-adding activities such as deep-dive analysis or real-time speech insights.

What’s more, evaluation consistency is likely to see an immediate uplift, as is agent performance, through real-time feedback.

3. Auto Coaching

Team leaders spend 60-80% of their time buried in fragmented data or playing detective to understand performance issues. Auto coaching can bring together call data, performance stats and behavioural insights into one view, streamlining prep time and allowing leaders to focus on actual coaching.

From an efficiency perspective, this facilitates a shift in manager-to-agent ratios from 1:12 or 1:15 to something closer to 1:18 without losing effectiveness. Beyond that, coaching quality and consistency improve, and agent development becomes more targeted and faster. It also unlocks the potential for automated role play, both on the job and in grad bays. Together, this provides the basis for greater job satisfaction among managers and agents alike, as well as higher-quality interactions throughout the operation, all of which affect broader measures such as agent attrition, CSAT and brand perception.
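For a sense of scale, the sketch below counts the team leaders needed at each span of control for a hypothetical 200-agent operation, rounding up because every agent needs a team leader. The ratios are those quoted above; the centre size and function name are assumptions for illustration.

```python
import math


def team_leaders_needed(agents: int, agents_per_leader: int) -> int:
    """Whole team leaders required to cover every agent at a given span of control."""
    return math.ceil(agents / agents_per_leader)


AGENTS = 200  # hypothetical centre size
target = team_leaders_needed(AGENTS, 18)
for span in (12, 15):
    leaders = team_leaders_needed(AGENTS, span)
    print(f"1:{span} -> {leaders} team leaders; at 1:18 -> {target} ({leaders - target} fewer)")
```

On these assumptions, moving to 1:18 means 12 team leaders instead of 14 to 17.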

SIDE NOTE: While those first three use cases focus a lot on the potential for reduction in headcount, it’s often more about doing better work, not just less work. Think: HITL (Human in the Loop), not human out of the picture.

4. Identifying Vulnerable Customers

This is where AI starts playing a key role in risk management and regulatory compliance. Agents can’t always be relied upon to spot vulnerability signals in real time, especially when they’re under pressure to do many things at once in a short space of time. AI can listen in and flag when it detects signs of vulnerability, alerting the agent in the moment and ensuring the right customer journey is followed.

The benefit? Reduced regulatory risk, better outcomes for vulnerable customers, and more confidence in compliance reporting. This use case also pairs naturally with summarisation – capturing the right context and actions in the CRM.

5. Agent Assist

Beyond risk management and efficiency, AI also enables agents to add value in the moment. Agent Assist tools analyse the live conversation and suggest actions – whether it’s handling a low-value enquiry quickly, spotting a sales opportunity, or guiding a customer toward a better outcome.

This is where things get exciting. AI is no longer just reducing cost – it’s helping unlock customer lifetime value and improving journeys. It’s also a mindset shift: from cost centre to value driver.

SIDE NOTE: The constant push for self-serve may well be eroding brand loyalty. A great conversation with an agent isn’t only about making a sale or solving a query; it’s an experience that shapes how the customer perceives the brand.

6. Hands-Free Conversations

Imagine an agent who doesn’t have to type, click around systems, or juggle tabs – just talk and listen. That’s the promise of hands-free conversations. With AI handling navigation, form filling, and admin tasks, agents can give customers their full attention.

It’s not just about productivity, it’s about truly human interactions that focus solely on the customer. How satisfying would that be? It could change the type of people you hire and shift expectations around what great service looks like.

7. Full Voice AI

Everyone’s chasing the holy grail: fully autonomous AI voice agents. Why? 24/7 customer contact, instant routing, and scalable service without scaling headcount.

But Jimmy’s message was clear – don’t rush it, though do keep your eyes on the prize. Build your maturity and path to value with easier use cases, underpinned by the right data and processes. This isn’t about flipping a switch – it’s about a journey to transformation.

Final Thoughts: Think “value first, tech second”

Across every use case, the AI you deploy is about outcomes. Whether that’s saving time and cost, improving job satisfaction or deepening customer relationships, AI only succeeds when it’s introduced with purpose.

Start small. Pick the use case with the clearest ROI. And don’t be afraid to move fast – but move smart.